-

• #2

Nice - are you trying to set up DMA using JavaScript to do SPI sends? There's a tool at https://github.com/espruino/Espruino/blob/master/scripts/build_js_hardware.js that'll generate JS for the registers in bits of hardware so you can access it more easily.

Yes, below 23 bytes it's more efficient to not use flat strings to back the arrays, so Espruino does that. Also for Uint8Array it'll try once to make a flat string, but if it doesn't succeed it'll just back it with a sparse one.

Have you seen http://www.espruino.com/Reference#l_E_getAddressOf ? You can at least run that with the second argument as true, and it'll return 0 if there isn't a flat array.
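A minimal sketch of that check, assuming the `E` object is passed in as a parameter so the helper can also be tried off-device with a stub. The helper name `flatAddressOf` is made up for illustration and is not part of Espruino:

```javascript
// Hypothetical helper: returns the data address of a flat buffer,
// or throws if Espruino reports 0 (i.e. the variable is not flat).
// `espruino` is expected to be Espruino's global `E` object.
function flatAddressOf(espruino, buffer) {
  var addr = espruino.getAddressOf(buffer, true); // true = flat address only
  if (!addr) throw new Error("buffer is not flat, cannot be used for DMA");
  return addr;
}
```

On a board this would be called as `flatAddressOf(E, arr)` before handing the address to any DMA register.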

In the latest builds (post 1v95) of Espruino you'll find `E.toString` is much more reliable at creating flat strings. I'd suggest the little known hack of `E.toString({data : 0, count : NUM_BYTES})`.

```javascript
var flat_str = E.toString({data : 0, count : NUM_BYTES});
var arr = new Uint8Array(E.toArrayBuffer(flat_str));
var addr = E.getAddressOf(flat_str);
```

Hope that helps!

-

• #3

many thx - nice hack to get a flat Uint8Array ;)

i packed it into some handy functions extending your E instance:

```javascript
// grant flat arraybuffer http://forum.espruino.com/conversations/316409/#comment14077573
if (E.newArrayBuffer===undefined) E.newArrayBuffer= function(bytes){
  const mem= E.toString({data:0,count:bytes});
  // undefined -> failed to alloc the *flat* string
  if (!mem) throw Error('alloc flat for '+bytes+' bytes FAILED!');
  return E.toArrayBuffer(mem);
};
if (E.newUint8Array===undefined) E.newUint8Array= function(cnt){
  return new Uint8Array( E.newArrayBuffer(cnt));
};
if (E.newUint16Array===undefined) E.newUint16Array= function(cnt){
  return new Uint16Array( E.newArrayBuffer(cnt*2));
};
if (E.newUint32Array===undefined) E.newUint32Array= function(cnt){
  return new Uint32Array( E.newArrayBuffer(cnt*4));
};
```

at the moment, my DMA extension for SPI supports TX only - but gives a really fine performance improvement when running at 12.5 MBaud.

-

• #4

That's awesome - and your DMA extension is all written in JS? Have you managed to make a new ILI9341 module that uses it?

-

• #5

yes, i had it all written in JS, but changed then to asm because of the high DMA setup costs in JS. In JS it took approx. 7ms, which results at eff. 5MBaud in 4375 byte. or in other words - transmissions of less than 4,4kbyte would be LESS efficient using DMA over the native SPI implementation. with asm i could reduce the time by approx. 80%, so DMA makes sense for any packet >500byte.
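The break-even figure for the JS setup can be reproduced with a few lines. This is only a sketch of the arithmetic in the post; the function name `breakEvenBytes` is made up for illustration:

```javascript
// Bytes that a blocking SPI write could have sent during the DMA setup time.
// setupMs: DMA setup overhead in milliseconds, baud: effective SPI bit rate.
function breakEvenBytes(setupMs, baud) {
  var bits = setupMs * baud / 1000; // bits clocked out during the setup time
  return Math.round(bits / 8);
}

console.log(breakEvenBytes(7, 5e6)); // 4375
```

Any transfer shorter than that is finished by the native SPI write before the DMA has even started.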

regarding the ILI9341 module: on the standard module only the fillrect benefits from DMA, for the ILI9341pal things are a bit better. but in the end i decided to replace both with my own ILI9341 driver, adding some pretty features such as smoothed fonts (incl. the font generator necessary to build them from any google font). if you like, i can provide it to you in the next days.

-

• #6

Wow, using http://www.espruino.com/Assembler - or actually using compiled-in C code?

http://www.espruino.com/Compilation might also be an option, since it's got some shortcuts in there to make peek and poke really quick :)

I'd be really interested in seeing what you've done - I can't promise much about pulling it in, but smooth fonts would be really neat.

-

• #7

i am using the E.asm(...). it's a great tool to get things done (even when some thumb instructions are missing;).

compilation is fine, but does not bring that boost as E.asm does - even when just using peek and poke. I implemented the same operation in 3 different ways, and called each 1000 times:

```javascript
// takes 538ms ... 222% of the fastest
function rclr1(addr,mask){
  poke32( addr, peek32(addr) & ~mask);
}

// takes 334ms ... 138% of the fastest
function rclr2(addr,mask){
  "compiled";
  poke32( addr, peek32(addr) & !mask); // ~ not supported by compiler
}

// takes 242ms
var rclr3=E.asm("void(int,int)",
  "ldr r2,[r0]",
  "bic r2,r1",
  "str r2,[r0]",
  "bx lr"
);
```

i took a look at the compiler output - the differences in code between rclr2 and rclr3 speak for themselves:

```javascript
var rclr2=E.nativeCall(1, "JsVar(JsVar,JsVar)", atob("LenwT4ewBq1F+AgNB0Y0SCpLeESJRphHKU6CRrBHASMAkwAjGkYZRgGVJkyDRqBHgEYlTFhGoEdQRqBHQEawRyJLgkZIRphHgPABACBLwLKYRyBLAUaDRiYiUEaYRx5KA5CQRx1KkEeBRgObGEagR1hGoEdQRqBHQEagRxlIBJcOS834FJB4RJhHB0awRwIjAJMAIxpGGUYBlQpNBkaoRwVGMEagRzhGoEdIRqBHKEagRwAgB7C96PCPAL+1hgMA0aYCAPFQAgDldAUAIawCAHV2BQCLKwIAiZgCAIV2BQDSAAAAZQAAAHBlZWszMgBwb2tlMzIAAAA="));
var rclr3=E.nativeCall(1, "void(int,int)", atob("AmiKQwJgcEc="))
```

ps: seems that the unary '~' operator has been forgotten in the compiler

-

• #8

I published the current status of the SPI DMA driver at https://github.com/andiy/espruino.git

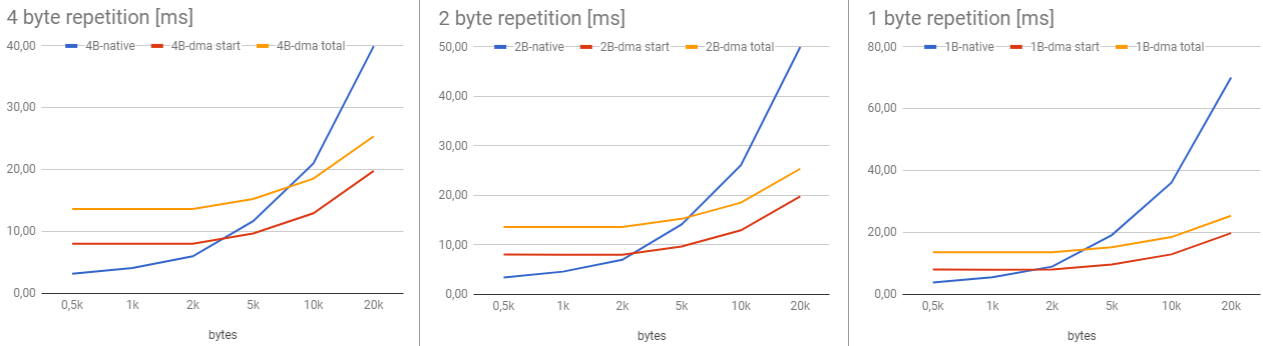

Keep in mind that DMA only pays off above a certain amount of data. The benchmarks below give an idea of when DMA is an advantage.

Times [ms] for sending a data buffer of length N:

```
bytes | native write | writeInterlaced* | writeInterlaced$*
----------------------------------------------------------
20k   | 42.0         | 4.2 / 18.4       | 3.0 / 18.0
10k   | 22.2         | 4.2 / 11.8       | 3.0 / 11.4
1k    | 4.1          | 4.2 / 9.9        | 3.0 / 8.7
0.5k  | 3.0          | 4.2 / 9.9        | 3.0 / 8.7

*) 1st number is the net time for calling writeInterlaced, 2nd number is the total transmission time
```

CONCLUSION:

- writeInterlaced() overtakes native write() at 1k+ bytes

- writeInterlaced$() overtakes native write() at 500+ bytes

When sending a small buffer of 1, 2 or 4 byte multiple times, the results are as below.

```javascript
QSPIx.writeInterlaced( buf, N);
// is compared with
SPIx.write({data:..,count:N});
```

CONCLUSION:

- 1 byte buffer: advantage for writeInterlaced at N > 1800

- 2 byte buffer: advantage for writeInterlaced at N > 3000

- 4 byte buffer: advantage for writeInterlaced at N > 3500
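The thresholds above could be wrapped into a small decision helper. This is a sketch only: the numbers are the measurements from this post (EspruinoWIFI, STM32F411) and not universal constants, and the name `dmaLikelyFaster` is made up for illustration:

```javascript
// Repeat counts above which writeInterlaced(buf, N) beat native write(),
// keyed by buffer length in bytes (values measured in this post).
var REPEAT_THRESHOLD = { 1: 1800, 2: 3000, 4: 3500 };

// Hypothetical helper: true if the DMA path is expected to be faster,
// false if not, undefined if the case was not measured.
// bufBytes: buffer length, repeat: how often it is sent (default: once).
function dmaLikelyFaster(bufBytes, repeat) {
  if (repeat === undefined || repeat === 1) {
    return bufBytes >= 1024; // single buffer: writeInterlaced() wins at 1k+ bytes
  }
  var limit = REPEAT_THRESHOLD[bufBytes];
  if (limit === undefined) return undefined; // no measurement for this size
  return repeat > limit;
}
```

For example, `dmaLikelyFaster(2, 4000)` returns `true`, while a single 512 byte buffer would still go through the native `write()`.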


-

• #9

Wow, thanks for this - that's an awesome bit of work!

As far as I know you're the first person that's used `E.asm` for serious work. Just wondering:

- Which instructions did you try that weren't there - `strh`/`ldrh`? any others I should add?

- Now there's support for multiline 'templated' strings, I could support that in the IDE, which would probably make using the assembler a lot more pleasant.

Also, I just added `~` to the compiler, and fixed another issue (it wasn't inlining the peek/poke because it wasn't sure the address was an integer).

Your initial code:

```javascript
function rclr2(addr,mask){
  "compiled";
  poke32( addr, peek32(addr) & ~mask);
}
```

Should be much better now. However it's not perfect because the argument still comes in as a `JsVar` and has to be converted in the code each time it's used.

This one's very slightly better, but again not great.

```javascript
function rclr2(addr,mask){
  "compiled";
  var a = 0|addr;
  poke32( a, peek32(a) & ~mask);
}
```

Honestly if you're happy with writing Assembler then that's definitely best :)

-

• #10

wow² ;) you are really speedy!

you are right - i was missing the half word operations

E.asm seems to be available in the editor window only. when used in modules, it does not work. is there an easy way to have E.asm for modules, too?

my SPI DMA driver has a very strange issue open (marked as i#2). it applies only to the writeInterlaced( buf, N) call - i.e. when repeating the buffer. in this case, the display shows just random data for the last chunk sent. when adding a dummy chunk (just 1 pixel) at the end, it works fine (but at the price that the function has to wait until everything is sent). the behaviour occurs independent of SPIx, byte count and baudrate. the only thing i saw was that writing to a certain JSVar while the DMA is running in the background seems to change the DMA data. but when checking the DMA, it definitely did not point to the JSVar... very strange... in fact, i could not figure out what the real reason is. do you use the DMA in the firmware? do you have any experience with DMA in FIFO mode + repeating source data?

-

• #11

Just a thought - You could write some assembler code that did basically what the ILI9341pal driver does, but with DMA:

1. Take 4bpp (?) data a chunk at a time and unpack it into another buffer with 16 bits.

2. Kick off DMA from that buffer

3. Take another 4bpp (?) data chunk and unpack it into another buffer with 16 bits.

4. Wait for DMA to finish

5. Goto 2

Obviously you've got your current solution with the nice fonts so it's not a big deal, but that could end up being really interesting.
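The loop above can be sketched in plain JS to show the buffer handling. This is an illustration under assumptions, not the ILI9341pal code: `startDma(buf, pixels)` and `waitDma()` are hypothetical driver hooks, the high nibble is assumed to hold the first pixel, and on a real board both 16 bit buffers would have to be flat (for example via the `E.newUint16Array` helper from post #3).

```javascript
// Expand packed 4bpp pixels (high nibble first - an assumption) into 16 bit
// colours via a 16-entry palette. Returns the number of pixels written.
function unpack4bpp(src, srcOffset, byteCount, palette, dst) {
  for (var i = 0; i < byteCount; i++) {
    var b = src[srcOffset + i];
    dst[2 * i] = palette[b >> 4];
    dst[2 * i + 1] = palette[b & 15];
  }
  return byteCount * 2;
}

// Ping-pong loop: unpack the next chunk while the previous one is in flight.
// startDma(buf, pixels) and waitDma() are hypothetical driver hooks.
function sendPaletted(src, palette, chunkBytes, startDma, waitDma) {
  var bufs = [new Uint16Array(chunkBytes * 2), new Uint16Array(chunkBytes * 2)];
  var active = 0;
  var busy = false;
  for (var off = 0; off < src.length; off += chunkBytes) {
    var n = Math.min(chunkBytes, src.length - off);
    var pixels = unpack4bpp(src, off, n, palette, bufs[active]); // steps 1 and 3
    if (busy) waitDma();            // step 4: previous chunk must be done
    startDma(bufs[active], pixels); // step 2: kick off DMA from this buffer
    busy = true;
    active ^= 1;                    // switch to the other buffer
  }
  if (busy) waitDma();              // let the last chunk finish
}
```

Because the unpacking of chunk n+1 happens before the wait for chunk n, the CPU work overlaps with the transfer as long as unpacking is faster than the SPI.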


-

• #12

E.asm seems to be available in the editor window only. when used in modules, it does not work.

I just tested, and you can turn on `Modules uploaded as functions (BETA)` in `Communications` under Settings. When I do a new IDE release that'll be fixed though and it'll automatically assemble even when not doing that.

I'm not sure about your DMA issues... All I've used it for is the TV output capability, and I'm pretty sure it's not used anywhere else. Not sure what to suggest really - variables don't get moved around in memory during GC so I don't think that could be an issue either.

-

• #13

i implemented something similar, but with larger chunks (1..10kBytes); sending just 16bits with DMA is very slow. it might be even better to write directly to SPI TX? maybe DMA double buffer (DBM) helps, i did not try till now. but i am not sure if 16bits are enough to get rid of the inter-byte gap (due to CPU load for DMA ready scanning). think i will give it a try.

i have identified another performance brake: E.mapInPlace; i think its use of JSVars slows down the lookup. using asm coded specialized functions for 1/2/4bpp is about 20x faster.

-

• #14

ufff - "compiled" is damn fast now. same benchmark as before, but now compiled is even 4ms (~2%) faster than my asm function! seems you implemented some quantum technology ;)

FYI: the `a=0|addr` does not improve anything (but when being faster than asm, that's ok ;)

-

• #15

I just tested, and you can turn on Modules uploaded as functions (BETA) in Communications under Settings.

great - but with this option ON, "compiled" produces an error (see attachment). but don't worry, i can live without (with some less comfort). and the new lightning fast peek/poke compilation already helps pretty much.

1 Attachment

-

• #16

"compiled" is damn fast now. same benchmark as before, but now compiled is even 4ms (~2%) faster than my asm function!

Great!

but with this option ON, "compiled" produces an error

That's interesting... Are you sure it's not just that there was an issue connecting to the board that time? If you disconnect and reconnect via the IDE button then it may work

-

• #17

If you disconnect and reconnect via the IDE button then it may work

nope, even restarting the web IDE does not help --- but dis/reconnecting the Espruino solves it!

-

• #18

inlining the peek/poke

there seems to sit a little bug - this does not work:

```javascript
const a = [<address>,<value>];
poke32( a[0], a[1]);
```

this is fine:

```javascript
const a = [<address>,<value>];
const x= a[0];
const y= a[1];
poke32( x,y);
```

this is fine, too:

```javascript
const a = [<address>,<value>];
poke32( a[0]|0,a[1]|0);
```

another - similar? - flaw i have seen:

```javascript
const SPI_CR2_TXDMAEN = 0x0002;

function rset16( addr, mask){
  "compiled";
  console.log( addr.toString(16), mask.toString(16)); // debug output
  poke16( addr, peek16( addr) | mask);
}

function foo( qctl, buf_ptr, byte_cnt) {
  "compiled";
  const SPI_CR1= ...;
  ...
  rset16( SPI_CR1+4, SPI_CR2_TXDMAEN);
}
```

generates this output: 40013004 [object Object]

expected output: 40013004 2

to achieve the expected behaviour, i have either to

- remove "compiled" directive from foo, or

- do rset16( SPI_CR1+4, SPI_CR2_TXDMAEN|0);


-

• #19

Which hardware does this work with? Don't the register addresses depend on the hardware platform? It would be cool to use this with an audio codec to record or play waveforms.

-

• #20

It's tested for the EspruinoWIFI based on STM32F411. Adaptation to another board should be quite easy, as long as it is an ARM processor. I think changing the xxx_BASE constants should be all that needs to be done.

Please consider that you will prefer the double buffer mode (DBM) for an audio codec. This feature is not used in the current lib.

-

• #21

1. Take 4bpp (?) data a chunk at a time and unpack it into another buffer with 16 bits.

2. Kick off DMA from that buffer

3. Take another 4bpp (?) data chunk and unpack it into another buffer with 16 bits.

4. Wait for DMA to finish

5. Goto 2

As already mentioned - to do a full DMA setup per 16bit is much too slow (because of the necessary procedure for setting up, starting and then stopping dma/spi). so i tried it with the double buffer (DBM) feature. basically this works nice, but it has some drawbacks:

- with 16bit payload at top speed of 12.5Mbaud we have to feed new data every 1.28us to the DMA buffers; on the EspruinoWIFI (100MHz, 1-2 cycles per instruction) this counts as ~85 instructions. well, it seems that some IRQ out there (timer?) blocks my feeder for longer than that.

- increasing the buffer by 10x (e.g. 10x16 bit) works perfect (6x16 does not), but... in this case we have to waste a lot of (blocking) cpu cycles while waiting for a buffer to get free for next data.

- in fact - a fast palette lookup (i am not talking about E.mapInPlace) - saves a lot of CPU load 'cause it's much faster than the SPI. it's not my intention to waste then these savings with blocking waits for background DMA ;)
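The timing budget in the first point can be checked with a short calculation. This is only a sketch of the arithmetic; the function name `refillBudget` is made up for illustration:

```javascript
// Time budget for refilling a DMA buffer before the SPI runs dry.
// words: buffer length in 16 bit words, baud: SPI bit rate, cpuHz: core clock.
function refillBudget(words, baud, cpuHz) {
  var seconds = words * 16 / baud;
  return {
    microseconds: seconds * 1e6,
    cpuCycles: Math.round(seconds * cpuHz)
  };
}

var one = refillBudget(1, 12.5e6, 100e6);
console.log(one.microseconds.toFixed(2), one.cpuCycles); // 1.28 128
var ten = refillBudget(10, 12.5e6, 100e6);
console.log(ten.microseconds.toFixed(2), ten.cpuCycles); // 12.80 1280
```

At 1-2 cycles per instruction, 128 cycles is somewhere between 64 and 128 instructions, which matches the ~85 quoted above; a 10-word buffer stretches the budget to 1280 cycles.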

the best way of processing paletted image data seems to me to be:

- unpalette graphics data (typ. 1/2/4bpp into 16bpp) chunk by chunk with a really fast lookup method

- each chunk as huge as possible (typ. 2..20 kbyte)

- this allows parallel processing of JS code while the last chunk goes over the line

some simple measurements of optimized asm 1/2/4 into 16bit lookup functions show that unpaletting is typ. 3..10 times faster than the net SPI transmission time. e.g. on 10k pixels @1bpp we bring >11ms (12.80-1.44) of cpu time back to JS compared to any blocking method.

```
test            pixels   lookup [ms]   SPI [ms]   ratio
test_map1to16     1000          0.43       1.28    2.9x
test_map1to16    10000          1.44      12.80    8.9x
test_map2to16     1000          0.44       1.28    2.9x
test_map2to16    10000          1.56      12.80    8.2x
test_map4to16     1000          0.48       1.28    2.7x
test_map4to16    10000          1.87      12.80    6.8x
```

-

• #22

It sounds really good - yes, you'd need a biggish buffer to allow things like IRQs to get handled in the background if they need to be.

With your compiler issues:

```javascript
const a = [<address>,<value>];
poke32( a[0], a[1]);
```

should have given you the error:

```
Error: ArrayExpression is not implemented yet
```

Because defining JS arrays from compiled code isn't supported yet unfortunately. Even if it had worked, your code would have ended up being slow because it would have defined a JavaScript array type though.

With your other problem, that was definitely a compiler issue where it wasn't handling variables correctly when used as function args - that should now be fixed :)

Gordon

mrQ

ClearMemory041063

flat arrays are a mandatory thing when working with DMA.

but, what is the recommended way to create a flat array for sure?

this does not work for n<23, and does not reliably work for n>=23.

this always generates a flat variable, but it's a string and not an arraybuffer as needed.

furthermore i can not create an empty buffer by just specifying the length.

good to know, that these two behave like new UintXArray (and not like E.toString)

in fact, this does not work either:

my workaround for the moment: create always a UintXArray >=23 byte

but this is neither very elegant, nor guaranteed to work with future firmware versions.

are there any recommendations how to create a flat UintXArray, independent of its length?

thx!